
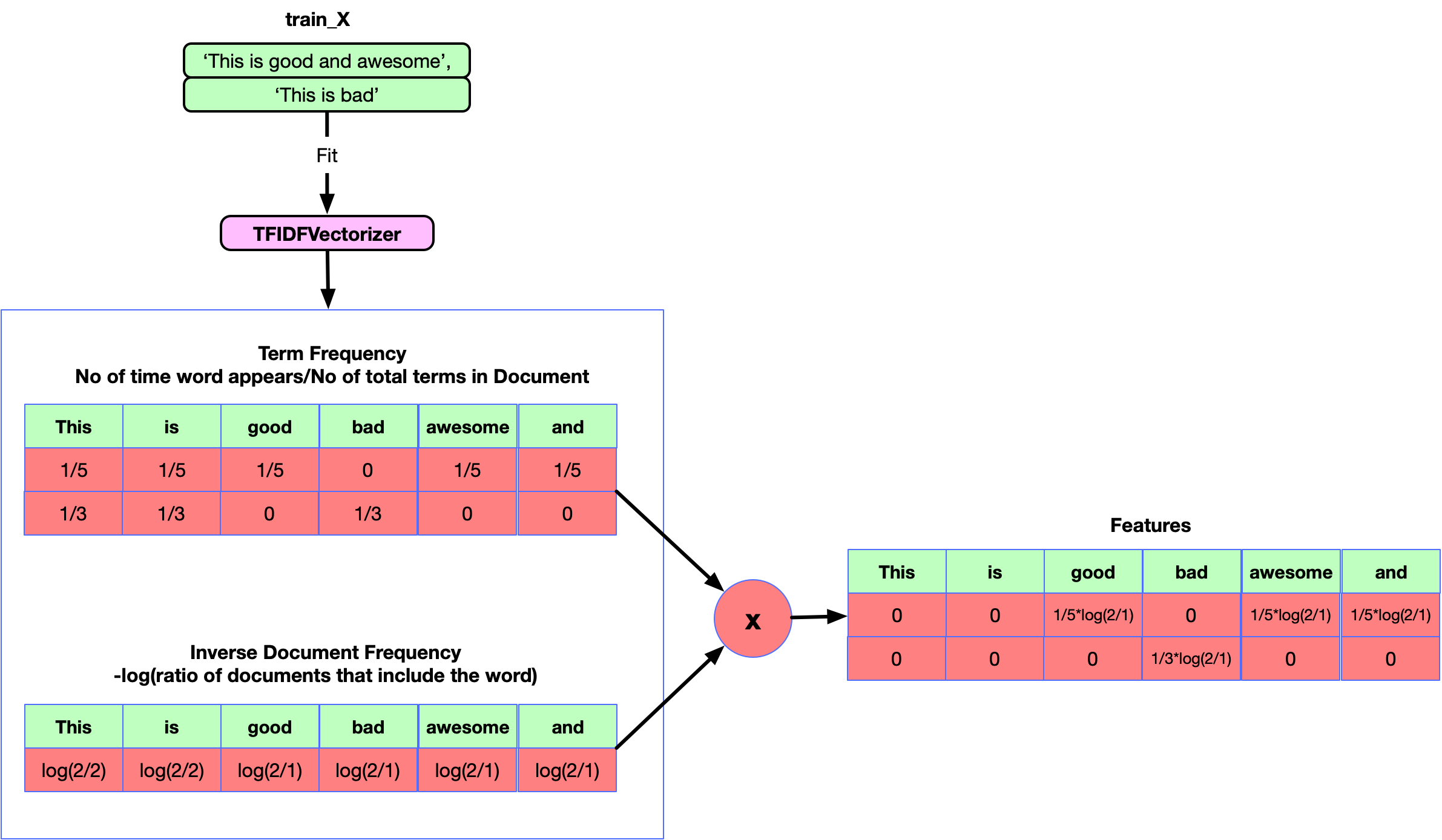

A bag-of-words representation is built in two steps. First, assign a fixed integer id to each word occurring in any document of the training set (for instance by building a dictionary that maps each word to its id). Then, for each document #i, count the number of occurrences of each word w and store it in X[i, j] as the value of feature #j, where j is the index of word w in the dictionary.

The bag-of-words representation implies that n_features is the number of distinct words in the corpus. This number is typically very large; the estimate that follows uses 100,000.

If n_samples = 10000, storing X as a NumPy array of type float32 would require 10000 x 100000 x 4 bytes = 4 GB of RAM, which is barely manageable on today's computers.

Fortunately, most values in X will be zeros, since a given document uses fewer than a few thousand distinct words. For this reason we say that bags of words are typically sparse, and a lot of memory can be saved by only storing the non-zero parts of the feature vectors. scipy.sparse matrices are data structures that do exactly this, and scikit-learn has built-in support for these structures.

Text preprocessing, tokenizing and filtering of stopwords are all included in CountVectorizer, which builds a dictionary of features and turns documents into feature vectors.

The metrics module (from sklearn import metrics) can print the confusion matrix of the test targets against the predicted labels. As expected, the confusion matrix shows that posts from the newsgroups on atheism and Christianity are more often confused for one another than with the remaining newsgroups.

We've already encountered some parameters, such as use_idf in the tf-idf step. Classifiers tend to have many parameters as well. For example, MultinomialNB includes a smoothing parameter alpha, and SGDClassifier has a penalty parameter alpha and configurable loss and penalty terms in the objective function (see the module documentation).
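
The counting scheme and the memory estimate described in this section can be sketched in plain Python. This is only an illustration under simple assumptions: documents are split on whitespace, and the helper names `bag_of_words` and `dense_size_bytes` are hypothetical, not part of scikit-learn. Each document is stored as a dictionary holding only its non-zero counts, which is the same idea that scipy.sparse implements far more efficiently.

```python
def bag_of_words(docs):
    """Return (rows, vocabulary) for a list of text documents.

    vocabulary maps each word to a fixed integer id j.
    rows[i] maps feature id j to the count of that word in document i,
    so only non-zero entries are stored.
    """
    vocabulary = {}
    rows = []
    for doc in docs:
        counts = {}
        # Assumption: naive lowercasing and whitespace tokenization.
        for word in doc.lower().split():
            j = vocabulary.setdefault(word, len(vocabulary))
            counts[j] = counts.get(j, 0) + 1
        rows.append(counts)
    return rows, vocabulary


def dense_size_bytes(n_samples, n_features, bytes_per_value=4):
    """Memory needed for a dense array (float32 uses 4 bytes per value)."""
    return n_samples * n_features * bytes_per_value


docs = ["the cat sat", "the dog sat on the mat"]
rows, vocabulary = bag_of_words(docs)

stored_values = sum(len(row) for row in rows)   # non-zero entries only
dense_values = len(docs) * len(vocabulary)      # every cell of X

print("vocabulary:", vocabulary)
print("stored", stored_values, "of", dense_values, "values")
print("dense 10000 x 100000 float32:", dense_size_bytes(10_000, 100_000), "bytes")
```

Even on this two-document toy corpus some cells are zero and never stored; on a real corpus with a vocabulary in the hundred-thousand range, the gap between stored and dense values becomes far larger.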

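The scikit-learn pieces named in this section (CountVectorizer, the alpha smoothing parameter of MultinomialNB, and the confusion matrix from sklearn.metrics) can be tried together on a tiny corpus. The sketch below is not the newsgroups experiment: the documents and the two labels are made up for illustration, and `stop_words="english"` and `alpha=1.0` are assumed settings rather than values taken from the text.

```python
from sklearn import metrics
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB

# Hypothetical toy data: label 0 = religion-like, label 1 = graphics-like.
train_docs = [
    "god faith church belief",
    "atheism religion argument god",
    "graphics image rendering pixel",
    "image file format graphics card",
]
train_target = [0, 0, 1, 1]
test_docs = ["church and faith", "rendering an image"]
test_target = [0, 1]

# CountVectorizer tokenizes, filters stop words, builds the dictionary
# of features and returns a scipy.sparse matrix of word counts.
vectorizer = CountVectorizer(stop_words="english")
X_train = vectorizer.fit_transform(train_docs)
X_test = vectorizer.transform(test_docs)  # reuse the fitted dictionary

# alpha is the smoothing parameter of MultinomialNB.
clf = MultinomialNB(alpha=1.0).fit(X_train, train_target)
predicted = clf.predict(X_test)

# Rows are true labels, columns are predicted labels.
cm = metrics.confusion_matrix(test_target, predicted)
print(X_train.shape)
print(cm)
```

Off-diagonal entries of the printed matrix count documents of one class predicted as the other, which is how the confusion between two newsgroups would show up on real data.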